Which AI intranet search tool is right for your enterprise?

The wrong platform choice is usually a governance problem in disguise. Here's how to diagnose your real constraint before shortlisting vendors.

The short answer

Here's how to pick the right AI intranet search tool for your organization:

Choose an intranet platform with built-in AI if your primary challenge is delivering trusted answers from governed company knowledge to all employees, including frontline workers, because the only way to control what the AI surfaces is to govern what it can access.

Choose a standalone enterprise search platform if your knowledge is scattered across many SaaS tools and your primary problem is cross-system discoverability.

Choose Microsoft Copilot if your workforce is fully licensed on Microsoft 365 and your need is AI-assisted productivity within those tools rather than a governed employee knowledge experience.

Most large enterprises will eventually need more than one of these solutions. The question is which to prioritize first. That decision depends entirely on what problem is actually costing your organization the most.

Why most AI intranet search decisions fail before they start

Most enterprise AI search evaluations are run backwards. Organizations compare feature lists, request demos, score vendors, and select a platform without first asking whether their content is ready for AI to retrieve and trust. The platform decision is the middle step, but it keeps getting treated as the first one.

No platform category can compensate for ungoverned content at scale. A built-in AI assistant surfaces stale policies if no one owns the content. A standalone search platform indexes outdated documents if the connected systems are not maintained. Microsoft Copilot generates answers from whatever lives in SharePoint, including everything that was never meant to be authoritative.

The governance decision comes first. The platform decision comes second. Understanding which platform category fits your environment is only useful once you know what governance infrastructure you are willing to build and maintain underneath it.

What problem does each AI intranet search approach actually solve?

The three main categories of AI intranet search are not interchangeable. Each one solves a different primary problem, and choosing the wrong category for the wrong problem is the most common reason these deployments underperform.

1. Built-in intranet AI: when governed knowledge delivery is the primary constraint

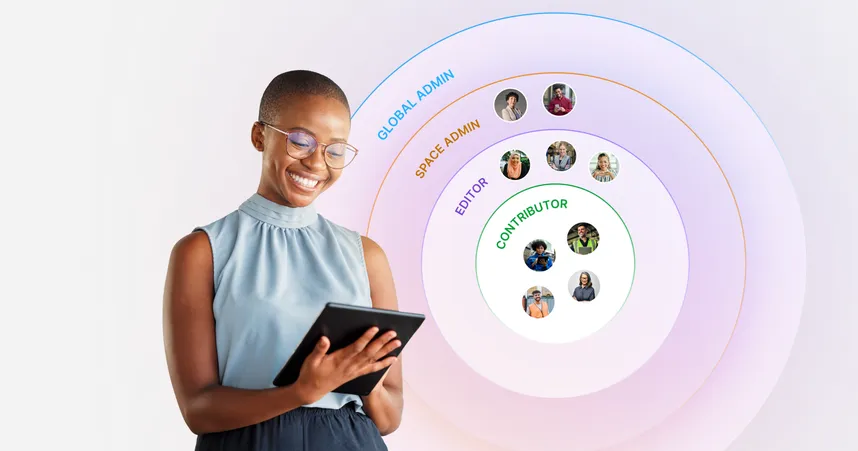

These are employee experience platforms that have integrated AI search directly into the intranet, where employees already access company information. The AI retrieves answers from the intranet's own governed content, and the governance infrastructure (publishing workflows, content ownership, permissions, lifecycle management) is built into the same platform that manages the content itself.

Where this approach wins: The AI and the content governance operate within one system. Content lifecycle rules — such as published vs. draft, current vs. archived, HR-restricted vs. company-wide — are automatically enforced at the retrieval layer. For frontline employees without corporate laptops or Microsoft licenses, mobile-first AI search through an employee app is accessible without requiring additional licensing or complex identity management.
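
As a rough illustration of what enforcing lifecycle rules at the retrieval layer means, the sketch below filters candidate documents before any of them can reach the AI. The `Document` fields, the state names, and the `retrievable_for` function are hypothetical and chosen for illustration; they are not taken from any vendor's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical intranet page carrying the governance metadata named above."""
    title: str
    status: str                          # "published" or "draft"
    archived: bool = False               # current vs. archived
    audiences: frozenset = frozenset()   # empty set means company-wide


def retrievable_for(docs, user_groups):
    """Return only the documents the AI may use for this user.

    A document passes when it is published, not archived, and either
    company-wide or restricted to a group the user belongs to.
    """
    groups = set(user_groups)
    return [
        d for d in docs
        if d.status == "published"
        and not d.archived
        and (not d.audiences or d.audiences & groups)
    ]


docs = [
    Document("Travel policy 2025", "published"),
    Document("Travel policy 2026 (draft)", "draft"),
    Document("Travel policy 2019", "published", archived=True),
    Document("Disciplinary process", "published", audiences=frozenset({"hr"})),
]

frontline_view = [d.title for d in retrievable_for(docs, {"frontline"})]
hr_view = [d.title for d in retrievable_for(docs, {"hr"})]
print(frontline_view)
print(hr_view)
```

The point of the design is that the filter runs before retrieval rather than after generation, so a draft or archived page never becomes a candidate answer in the first place.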

Where this approach has limits: Built-in AI retrieves answers from the intranet's own governed content. If your organization's knowledge lives primarily in other systems, such as SharePoint, Confluence, or ServiceNow, a built-in approach requires either migrating that content into the intranet or building integrations to connect it. For organizations with very fragmented tool ecosystems, this integration work can be significant.

Choose this approach if: Your primary goal is ensuring all employees, desk-based and frontline alike, receive trusted answers from governed company knowledge, and you are willing to invest in content governance as a prerequisite. This is the Trusted Front Door model: one place where authoritative knowledge lives, and one place where AI retrieves it.

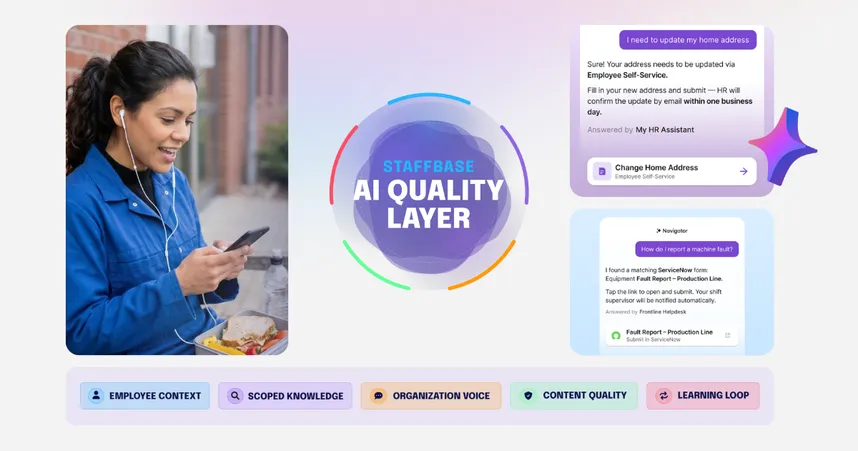

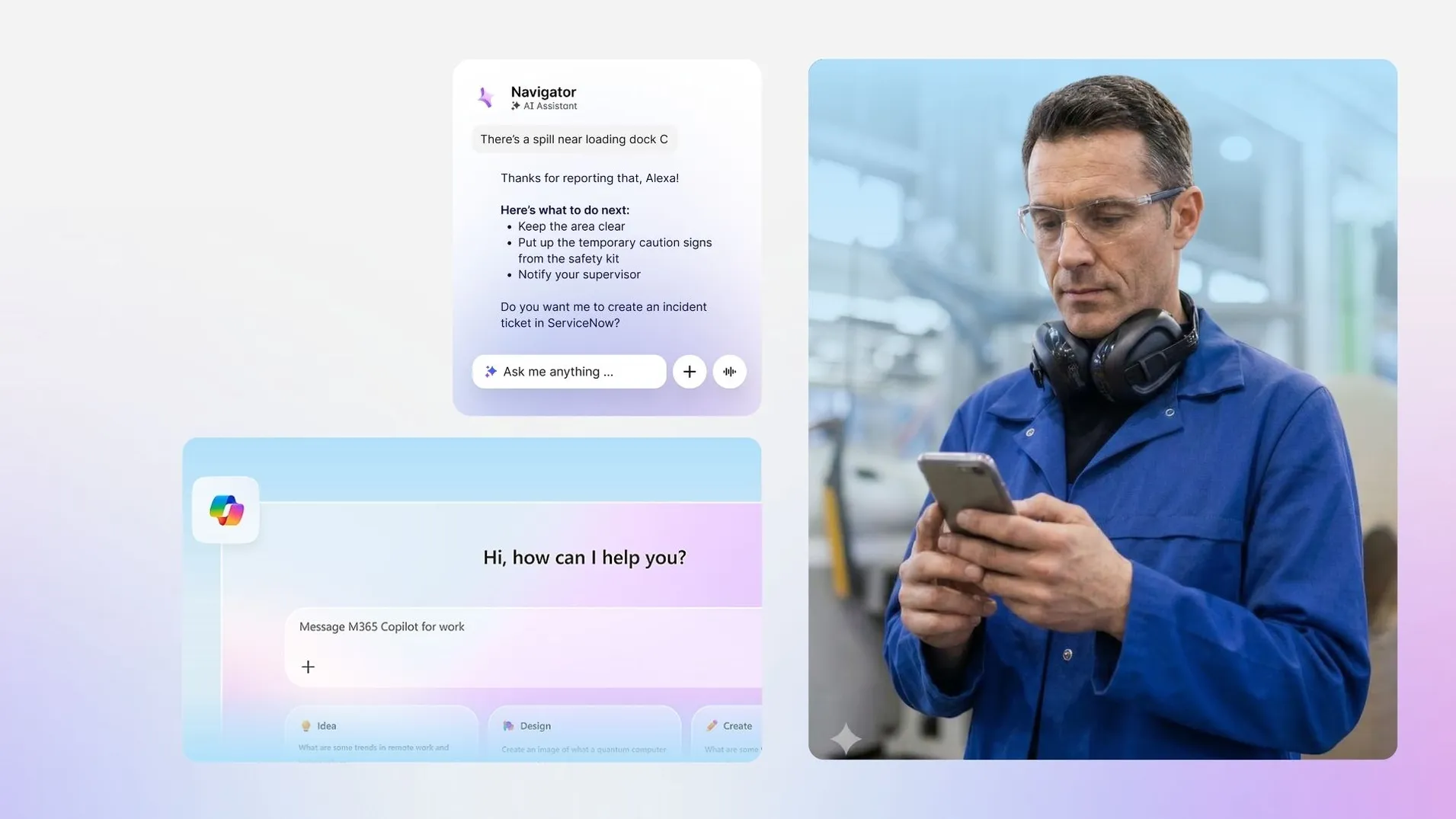

Staffbase is an example of this approach. Its AI assistant Navigator retrieves answers from governed intranet content, supports multilingual queries, and delivers source-backed responses through both desktop and mobile interfaces. The platform's governance tools — content ownership tracking, review cycles, search analytics — feed directly into what the AI can retrieve.

Enterprises use Staffbase as the governed communications and knowledge layer in front of broader Microsoft environments — benefiting from deep SharePoint and Teams integration while maintaining a single, trusted employee-facing front door. For organizations where frontline reach and content governance are the primary constraints, this integration is the structural differentiator.

2. Standalone enterprise search: when cross-system discoverability is the primary constraint

These are dedicated search products designed to connect across many enterprise systems simultaneously, including intranets, document repositories, chat platforms, ticketing systems, CRM tools, HR systems, and more. They build a cross-system index and provide a single AI-powered search interface that spans the entire tool ecosystem.

Where this approach wins: Breadth of integration. If an employee needs to find a document in SharePoint, a contract in Salesforce, or a ticket in ServiceNow, standalone enterprise search platforms retrieve across all of them from a single query. For organizations where knowledge fragmentation across many SaaS tools is the primary pain, this is the most direct solution.

Where this approach has limits: Answer quality depends entirely on the governance practices of each connected source system. A standalone search platform cannot govern SharePoint content on behalf of the SharePoint owner. It indexes whatever exists. In practice, trust often falls to the quality of the least-governed connected source — meaning the platform is only as reliable as the weakest system it connects to.

Choose this approach if: Your knowledge is genuinely distributed across many enterprise systems, your primary problem is cross-system discoverability, and you have governance practices in place within each source system that make the indexed content reliable.

Glean is a widely used example. It connects to 100+ enterprise applications, builds a company-specific knowledge graph, and generates AI-powered answers from indexed content. Its strength is cross-system discovery. Its trade-off is that it cannot enforce content governance within the systems it connects to, since that responsibility stays with each source.

3. Microsoft Copilot: when AI productivity within Microsoft 365 is the primary need

Microsoft 365 Copilot embeds AI assistance directly into productivity tools like Word, Teams, Outlook, SharePoint, and OneDrive, using the Microsoft Graph as its knowledge layer. It's less a standalone search platform than an AI layer woven into the tools employees already use for daily work.

Where this approach wins: For organizations deeply invested in Microsoft 365, Copilot provides AI assistance in the context where work happens. Copilot supports tasks like drafting documents, summarizing Teams meetings, and finding email threads without requiring employees to switch to a separate interface.

Where this approach has limits: Copilot operates within Microsoft's ecosystem. Organizations that need to reach frontline employees without Microsoft 365 licenses, which is common in manufacturing, logistics, retail, and healthcare, face a structural gap from the start. SharePoint is strong for storage and collaboration, but many enterprises find it does not, on its own, provide the governed, personalized employee knowledge experience they need at scale. The governance challenges of SharePoint do not disappear when Copilot is connected to it.

Choose this approach if: Your workforce is fully licensed on Microsoft 365, your primary need is AI assistance within Microsoft productivity tools, and you are not trying to solve frontline reach or build a governed employee knowledge destination.

Many large enterprises use Microsoft Copilot alongside a dedicated intranet platform rather than instead of one. Copilot handles AI-assisted productivity within Microsoft tools. The intranet platform handles governed knowledge delivery and frontline reach.

How the three approaches compare

| | Built-in intranet AI | Standalone enterprise search | Microsoft Copilot |
|---|---|---|---|
| Primary problem solved | Governed knowledge delivery to all employees | Cross-system discoverability across many SaaS tools | AI productivity assistance within Microsoft 365 |
| Governance model | Enforced within the platform | Depends on the governance of each connected source system | Depends on Microsoft 365 content governance |
| Frontline reach | Yes; mobile-first, no M365 license required | Varies by implementation | Limited; requires M365 licensing |
| Integration complexity | Lower for intranet-first organizations | Higher; connects many external systems | Low for M365-native organizations |
| Key trade-off | Requires integration work for knowledge outside the intranet | Answer quality reflects governance of source systems | Does not serve frontline employees or replace a governed intranet |

When is a Microsoft-first AI search strategy actually the right choice?

IT leaders often default to Microsoft-first approaches for AI intranet search, and the choice is rarely just inertia. Microsoft is already licensed, integrated with identity management, and approved through security review. Adding another platform means another governance conversation, another security audit, and another contract.

The Microsoft-first preference is justified when all three of these are true:

Your entire workforce is desk-based

Every employee is fully licensed on Microsoft 365

Your primary need is AI-assisted productivity within existing tools, not governed knowledge delivery to the full workforce

It breaks down when a significant proportion of the workforce is frontline or non-desk. In manufacturing, logistics, retail, and healthcare, that proportion is typically the majority, not the exception. Microsoft 365 licensing was not designed for employees without corporate email addresses or laptops. Extending Copilot to frontline workers means one of two outcomes:

| Option | Reality |
|---|---|
| Purchase additional M365 licensing for frontline workers | Expensive, often impractical at scale |
| Accept the exclusion | The employees with the most urgent need for fast answers (shift leads, nurses, field technicians) are the ones the system cannot reach |

The frame that resolves IT's concern: a governed intranet platform and Microsoft Copilot are not competitors. Copilot handles AI-assisted productivity for licensed desk workers. The intranet platform handles governed knowledge delivery for the entire workforce. Most large enterprises need both, and IT can govern both without compromising the Microsoft investment.

Why standalone search often fails Comms teams

Internal communications teams are often the strongest advocates for AI intranet search, and they also tend to be the most frustrated when standalone enterprise search is deployed instead. The reason is structural.

The core tension:

| | Standalone enterprise search | Built-in intranet AI |
|---|---|---|
| Indexing model | Everything it can reach across all connected systems | Only published, governed intranet content |
| Comms control | Lost; AI may surface any version of any content | Maintained; what Comms approves is what AI retrieves |
| Risk | Outdated drafts, superseded guidance, or off-message content surfaces alongside current material | Stale content only surfaces if Comms failed to govern it |
| Best for | Cross-system discoverability | Narrative consistency and message accuracy |

Standalone search's breadth, indexing many connected systems simultaneously, is exactly what undermines Comms teams. When AI retrieves from across the entire enterprise ecosystem, the team loses the ability to guarantee that what employees see is the current version, approved for their region or role, and consistent with the intended message.

Built-in intranet AI operates on a publication model, not a retrieval model. Only reviewed, approved, and published content can be retrieved. For Comms teams responsible for narrative consistency and message accuracy, this is the only model that works at scale. AI surfaces the knowledge base Comms has already built, at the moment of employee need, rather than competing with everything else in the enterprise ecosystem for relevance.

Why HR sees AI search as a liability risk (and how that risk is actually managed)

HR leaders worry about employees making consequential decisions about benefits, parental leave, compliance obligations, or disciplinary processes based on a confident AI answer that turns out to be wrong. Unlike a bad search result, which an employee can assess and discard, a confident AI answer carries implied authority. The concern is legitimate.

The risk is managed through two mechanisms:

1. Source links on every answer

Every well-implemented AI intranet search response includes links to the source documents. The AI provides speed and synthesis. The source link provides accountability. Employees can verify before acting, and the organization retains an auditable trail of what was surfaced and from where.
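
One way to picture this mechanism is an answer type that cannot exist without sources, paired with a log entry for each response. This is a minimal sketch under stated assumptions: `Source`, `SourcedAnswer`, `audit_record`, and the example URL are hypothetical names, not part of any product described here.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Source:
    title: str
    url: str


@dataclass(frozen=True)
class SourcedAnswer:
    question: str
    text: str
    sources: tuple  # tuple of Source objects

    def __post_init__(self):
        # Refuse to construct an answer that employees cannot verify.
        if not self.sources:
            raise ValueError("an answer must cite at least one source document")


def audit_record(answer, asked_at=None):
    """Build a log entry recording what was surfaced and from where."""
    asked_at = asked_at or datetime.now(timezone.utc)
    return {
        "asked_at": asked_at.isoformat(),
        "question": answer.question,
        "source_urls": [s.url for s in answer.sources],
    }


answer = SourcedAnswer(
    question="How many days of parental leave do I get?",
    text="See the parental leave policy for the current entitlement.",
    sources=(Source("Parental leave policy",
                    "https://intranet.example/hr/parental-leave"),),
)
record = audit_record(answer)
print(record["source_urls"])
```

Rejecting unsourced answers at construction time keeps the accountability rule in one place instead of relying on every caller to remember it.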

2. Governance is the real accuracy control

| Content state | AI behavior |
|---|---|
| Policy pages are owned and reviewed on a clear cycle, with no conflicting guidance | AI answers accurately |
| Pages outdated, duplicated, or unowned | AI surfaces the wrong answer confidently* |

*This is not a failure of the AI. It is AI making visible what was already broken in the content layer.

The counterintuitive implication for HR: AI intranet search does not introduce new accuracy risk. It makes existing accuracy risk impossible to ignore. An outdated benefits page that sat unread for three years becomes a live governance problem the moment AI starts citing it in employee answers. Organizations that treat this as a reason to delay deployment are deferring a governance investment they needed to make anyway. The AI did not create the problem. It removed the ability to pretend the problem did not exist.

Before you shortlist a platform, answer these five questions

Most platform decisions stall late: during security review, at budget approval, or when IT realizes the chosen tool conflicts with an existing Microsoft agreement. These five questions surface those blockers before they cost time.

1. Where does your knowledge live today?

| Situation | Implication |
|---|---|
| Most knowledge lives in one governed intranet | Built-in intranet AI: avoids integration complexity |
| Knowledge scattered across 10+ SaaS tools | Standalone enterprise search: cross-system discoverability is the real problem |
| Both | Start with the governed intranet as the destination; add a standalone search as a secondary layer |

2. What proportion of your workforce is frontline or non-desk?

This single question often determines the project before any demo is run.

If most employees have corporate laptops and M365 licenses → Microsoft Copilot and built-in intranet AI are both viable.

If a significant share are frontline without corporate email or laptops → Any platform requiring M365 licensing creates a structural exclusion. Built-in intranet AI with a mobile employee app is the only approach that reaches the full workforce.

3. Who owns your intranet content today?

This is the question most organizations answer least honestly, even though it determines the project timeline more than any platform decision does. Ask specifically: For each major content area (HR policies, IT guides, operational procedures), is there a named person responsible for accuracy and a recorded date of the last review?

If yes for most content → You are ready for AI deployment.

If no for most content → No platform produces trustworthy answers until that changes; governance work comes before platform selection.
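
The ownership question can be turned into a quick audit. The sketch below assumes a hypothetical inventory of content areas, each with an optional owner and last-review date. The 12-month review window and the reading of "most content" as more than half are assumptions, not thresholds given in this article, so adjust both to your own policy.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ContentArea:
    name: str
    owner: Optional[str]            # named person responsible for accuracy
    last_reviewed: Optional[date]   # None if never reviewed


def is_governed(area, today, max_age_days=365):
    """True when the area has a named owner and a review inside the window."""
    if not area.owner or area.last_reviewed is None:
        return False
    return (today - area.last_reviewed).days <= max_age_days


def readiness(areas, today, max_age_days=365):
    """Return the governed share (0.0 to 1.0) and the names of ungoverned areas."""
    gaps = [a.name for a in areas if not is_governed(a, today, max_age_days)]
    share = (1 - len(gaps) / len(areas)) if areas else 0.0
    return share, gaps


# Illustrative inventory only; owners and dates are made up.
areas = [
    ContentArea("HR policies", "Named HR owner", date(2026, 1, 15)),
    ContentArea("IT guides", None, date(2025, 11, 1)),
    ContentArea("Operational procedures", "Named ops owner", date(2023, 6, 30)),
]
share, gaps = readiness(areas, today=date(2026, 3, 1))
ready_for_ai = share > 0.5  # assumption: "most content" means more than half
print(f"{share:.0%} governed; gaps: {gaps}; ready: {ready_for_ai}")
```

The list of gaps is the useful output: it names the content areas where governance work has to happen before platform selection.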

4. Is your primary problem finding information or resolving questions?

| Problem type | What it looks like | Right approach |
|---|---|---|
| Finding information | "I know the answer exists somewhere; I just can't locate it across our tools." | Standalone enterprise search |
| Resolving questions | "Employees ask the same questions repeatedly but can't get to the answers fast enough." | Built-in intranet AI |

5. What is your IT governance model for AI?

Some organizations require all AI systems to operate within existing IT-managed platforms. Knowing this prevents a common failure mode: selecting the best-fit platform, then losing six months to a security review that was always going to block it. Ask IT directly: Does a new AI platform require a net-new security review, or can it be evaluated under an existing framework?

What your answers tell you:

| Dominant answer pattern | Recommended starting point |
|---|---|
| Knowledge in one intranet + frontline workforce + content is governed | Built-in intranet AI |
| Knowledge fragmented across many SaaS tools + desk-based workforce | Standalone enterprise search |
| Fully M365-licensed desk workforce + no frontline reach requirement | Microsoft Copilot |
| Mixed workforce + ungoverned content | Governance program first, platform decision second |
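
The mapping above can be expressed as a small function. This is a simplified sketch: the field names are hypothetical, the order in which overlapping patterns are resolved is an assumption, and real environments will rarely match one pattern cleanly.

```python
from dataclasses import dataclass


@dataclass
class Answers:
    knowledge_in_one_intranet: bool    # question 1
    frontline_workforce: bool          # question 2: significant frontline share
    content_governed: bool             # question 3: most content owned and reviewed
    fully_m365_licensed: bool = False  # every employee licensed on Microsoft 365


def recommended_starting_point(a):
    """Map a dominant answer pattern to a starting point from the table."""
    # Ungoverned content undermines every category, so it is checked first.
    if not a.content_governed:
        return "Governance program first, platform decision second"
    # Frontline reach rules out approaches that depend on M365 licensing.
    if a.frontline_workforce:
        return "Built-in intranet AI"
    # Assumption: for desk-based workforces, fragmentation outranks licensing.
    if not a.knowledge_in_one_intranet:
        return "Standalone enterprise search"
    if a.fully_m365_licensed:
        return "Microsoft Copilot"
    return "Built-in intranet AI"


example = Answers(
    knowledge_in_one_intranet=False,
    frontline_workforce=False,
    content_governed=True,
)
print(recommended_starting_point(example))
```

Treat the result as a first-priority suggestion rather than a final choice, since most large enterprises end up combining more than one category.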

When built-in and standalone approaches are complementary rather than competing

The sharpest division in this market is not between vendors. It's between organizations that have invested in content governance and those that have not.

For organizations with well-governed intranets, built-in AI delivers immediate value because the foundation is already there. For organizations where knowledge sprawl across many SaaS tools is the primary problem, standalone enterprise search addresses the real constraint. Many large enterprises eventually use both: a governed intranet as the primary employee knowledge destination, with a standalone search layer for cross-system discovery where employees need to pull from a broader tool ecosystem. The intranet becomes the Trusted Front Door, the single source of authoritative knowledge. The enterprise search layer handles everything else.

Staffbase integrates with Microsoft 365, SharePoint, ServiceNow, and other enterprise systems, allowing organizations to bring content from those systems into a governed intranet experience without abandoning existing tool investments. For organizations where frontline reach and content governance are the primary constraints, this model, with the governed intranet as the destination and enterprise tools as connected sources, tends to produce stronger AI answer quality than connecting AI directly to unmanaged source systems.

When this framework is overkill

This comparison is designed for large, distributed enterprises with complex knowledge environments and significant frontline workforces. If your organization has fewer than 500 employees, limited frontline complexity, and most knowledge already lives in one well-maintained system, simpler search improvements and better information architecture may be the right investment before AI search. The governance overhead of any platform in this comparison is likely to exceed the benefit at that scale.

Before you move to implementation

Selecting the right platform is the middle decision, not the final one. The decision before it is governance readiness: Is your content owned, reviewed, and structured well enough for AI to retrieve it reliably? The decision after it is rollout strategy: How do you deploy AI search in a way that builds employee trust rather than eroding it with wrong answers early?

See how Staffbase supports AI intranet search.

This answer reflects the Staffbase perspective based on enterprise customer experience and market research available as of March 2026. Platform capabilities change. Validate current feature sets directly with vendors during evaluation. This comparison assumes a large, distributed enterprise environment with a significant proportion of frontline or non-desk employees.