The AI Quality Layer: How to make sure AI actually works for every employee

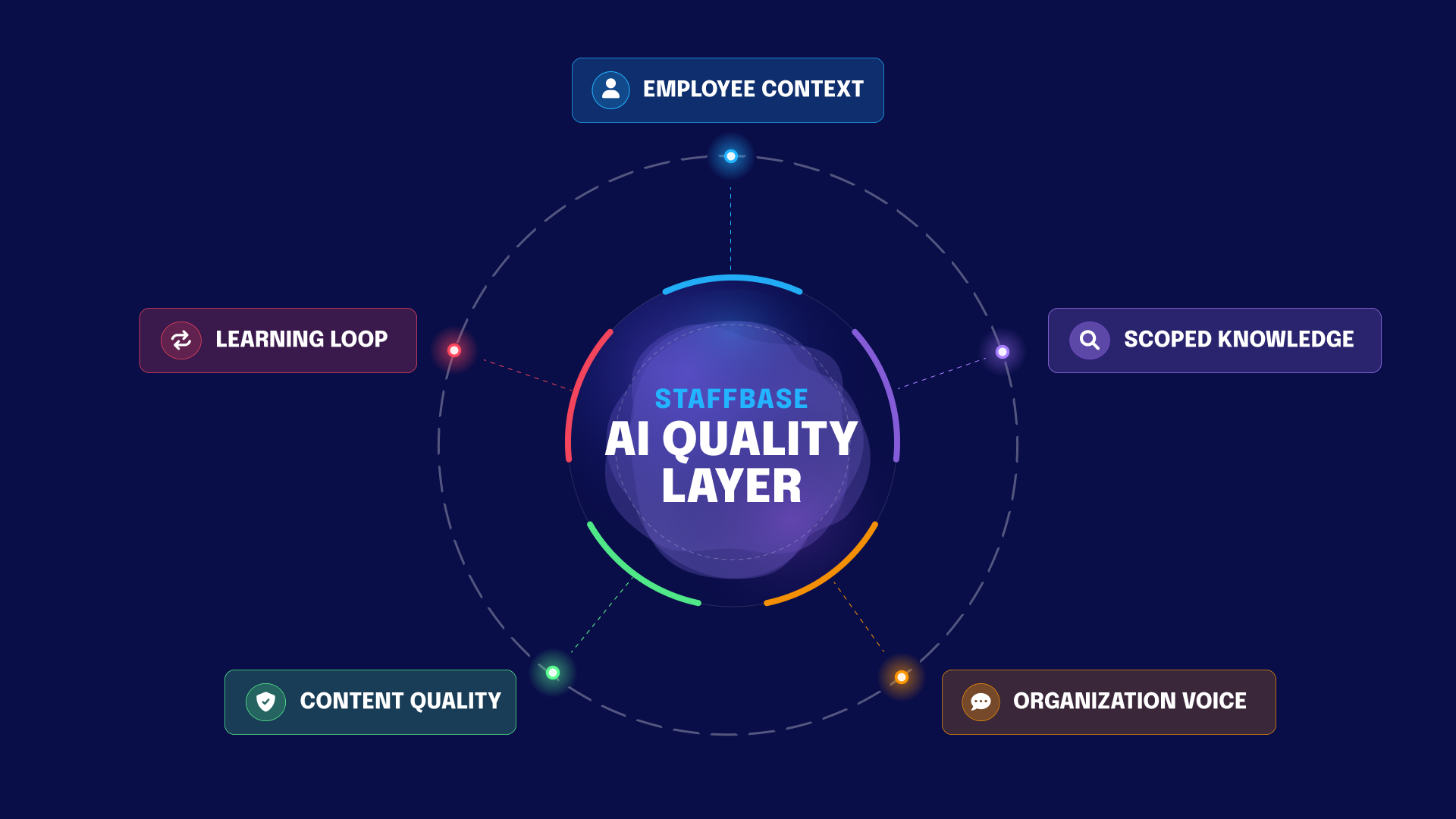

AI security is table stakes. Making every answer accurate, contextual, and trustworthy is the harder problem. The AI Quality Layer addresses it through five interconnected dimensions.

Key insights

The industry has focused on making AI safe (data controls, permissions, compliance), but safe AI can still produce answers that are only almost right — which at work means wrong. The Staffbase AI Quality Layer addresses this with five dimensions: knowing who is asking (Employee Context), searching only relevant content (Scoped Knowledge), sounding like your company (Organization Voice), keeping source content clean and current (Content Quality), and improving over time through feedback (Learning Loop).

Crucially, these aren’t bolt-on features — they’re deeply embedded across the entire Staffbase Employee Experience Platform, shaping every AI-generated interaction: intranet pages, the employee app, AI assistants, real-time translations, AI-assisted content creation, and more. The same quality standard applies everywhere, for every employee.

The goal: AI that’s not just safe enough to use, but good enough to trust at scale.

What’s the difference between safe AI and good AI?

The enterprise AI conversation has followed a predictable arc. First came the excitement over incredible models and seemingly limitless possibilities. But when the newness wore off, uncertainty and fear took root. What about data, compliance, and risk exposure? And from that anxiety, an entire governance industry emerged around a single question: Is the AI safe?

Data access controls. Permission boundaries. Compliance frameworks. Sensitivity labels. Ring-fenced environments. Every major platform is designed to ensure the AI doesn’t access unauthorized content, leak confidential knowledge, or expose protected information.

This matters. No organization should deploy AI without it. But you can have every permission locked, every data boundary in place, and every compliance box checked, and the AI can still produce almost-right output. A translation that’s technically accurate but sounds nothing like your company. A policy answer that was correct last month but ignores the version updated last week. A briefing that misses the context that makes it useful for this specific team.

Almost right. Not wrong enough to catch immediately. Not right enough to act on safely.

Or as Staffbase CEO Martin Böhringer put it at VOICES 2026:

“Almost right is wrong at work. In your personal life, almost right is fine. At work, almost right creates risk. It erodes trust. It makes people stop using the tool altogether.”

Martin Böhringer, CEO Staffbase

That’s because the industry has been focused on governing what goes into the AI. Hardly anybody is asking the harder question: Is what comes out actually good?

Is the answer accurate? Does it sound like your organization? Can the employee verify where the information came from? Can the company stand behind everything the AI produces on its behalf?

ClearBox Consulting founder Sam Marshall compared governance to the brakes of a car in a 2017 blog post, quoting Ralph O’Brien:

“Good governance is like having good brakes on a car — it makes it safer to go faster.”

Ralph O’Brien

This analogy is even more relevant in the age of AI. But brakes alone don’t get you anywhere. You need the engine, steering, and navigation, too. You need safety and quality.

That’s why we built the AI Quality Layer.

What is the AI Quality Layer?

The AI Quality Layer is the system between the AI model and the employee. It encompasses the checks, context, and controls that verify what the AI produces is actually trustworthy before it reaches anyone.

It is not a single feature, but rather five interconnected dimensions that work together across every AI-powered interaction in Staffbase. This covers everything from Navigator answers to town halls translated into native languages to AI-generated briefings and AI-assisted content creation. Three of these dimensions (Employee Context, Scoped Knowledge, and Organization Voice) shape every request in real time. Two (Content Quality and Learning Loop) ensure the system improves continuously over time.

The name is deliberate. Not AI Governance Layer, because governance is what the industry is already building. Not AI Trust Layer, because trust is an outcome, not a mechanism. Quality names the intent: make sure what comes out is genuinely good. Trust follows.

The safety foundation: AI Trust Hub

Before quality comes safety. At Staffbase, the AI Trust Hub provides centralized control over every AI feature in the platform. Administrators can see exactly where and how AI is applied, enable or disable AI capabilities per feature, and control what data the AI can access.

Our AI approach is based on the principles of T.R.U.S.T. — Transparency, Responsibility, User-in-the-loop, Security, Traceability. They are embedded in how the platform operates:

All data processing runs on Microsoft Azure.

Customer content is never used to train models.

Prompts and outputs are encrypted, not shared with other customers, and not accessible to third parties.

Additionally, AI respects existing content permissions, meaning users can only generate content based on information they already have access to. This is essential, but it’s also table stakes. Every serious platform should offer this. The question is what happens next. Once the AI is safe, how do you make sure it’s actually good?

That’s where the five dimensions come in.

| Dimension | The question it answers | What it controls |
|---|---|---|
| Employee Context | Who is asking? | Role, location, language, and history shape every answer |
| Scoped Knowledge | What should the AI draw from? | Purpose-built assistants with curated, approved content |
| Organization Voice | Does it sound like us? | Terminology, tone, and language rules set per space |
| Content Quality | Is the source actually reliable? | Continuous monitoring, flagging, and cleanup of published content |
| Learning Loop | Is the system getting better? | Feedback and analytics that improve answers over time |

1. Employee Context: How does AI know who it’s talking to?

AI that doesn’t know who it’s talking to can’t give a good answer.

Consider Lena, a technician who started at a manufacturing plant eight days ago. She’s on the floor, her uniform doesn’t fit right, and she needs to request a different size. She opens the company’s AI assistant on her phone and types a question. She has one shot to get this right. There’s no second screen to cross-reference or time to search through an intranet. She needs a fast answer she can trust and immediately act on.

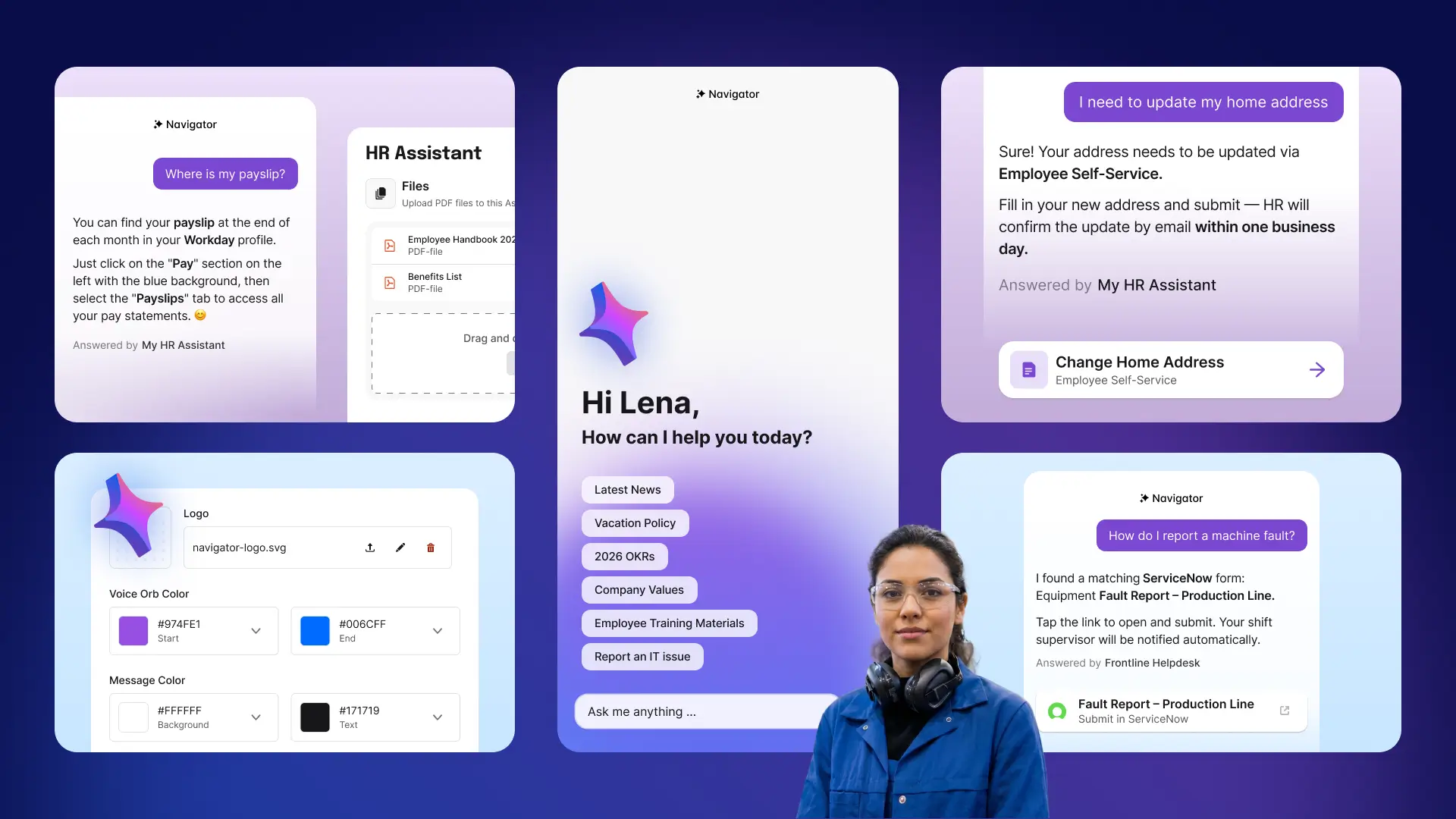

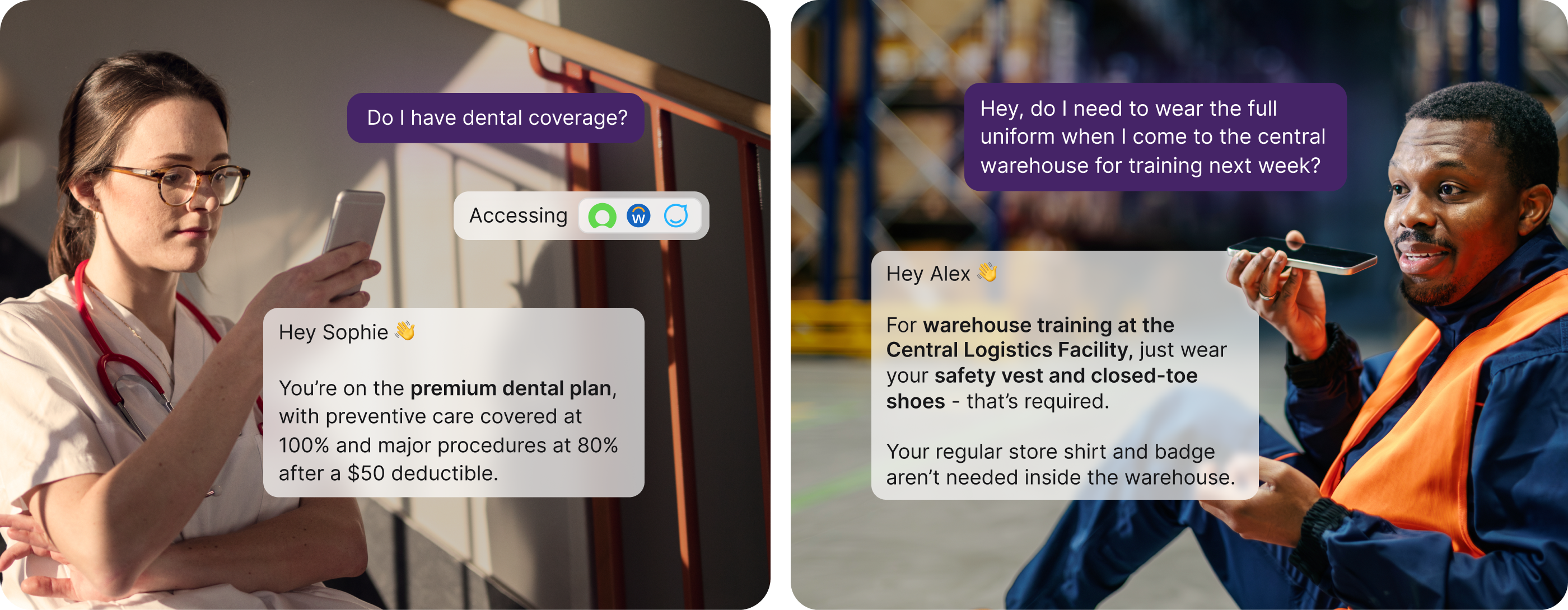

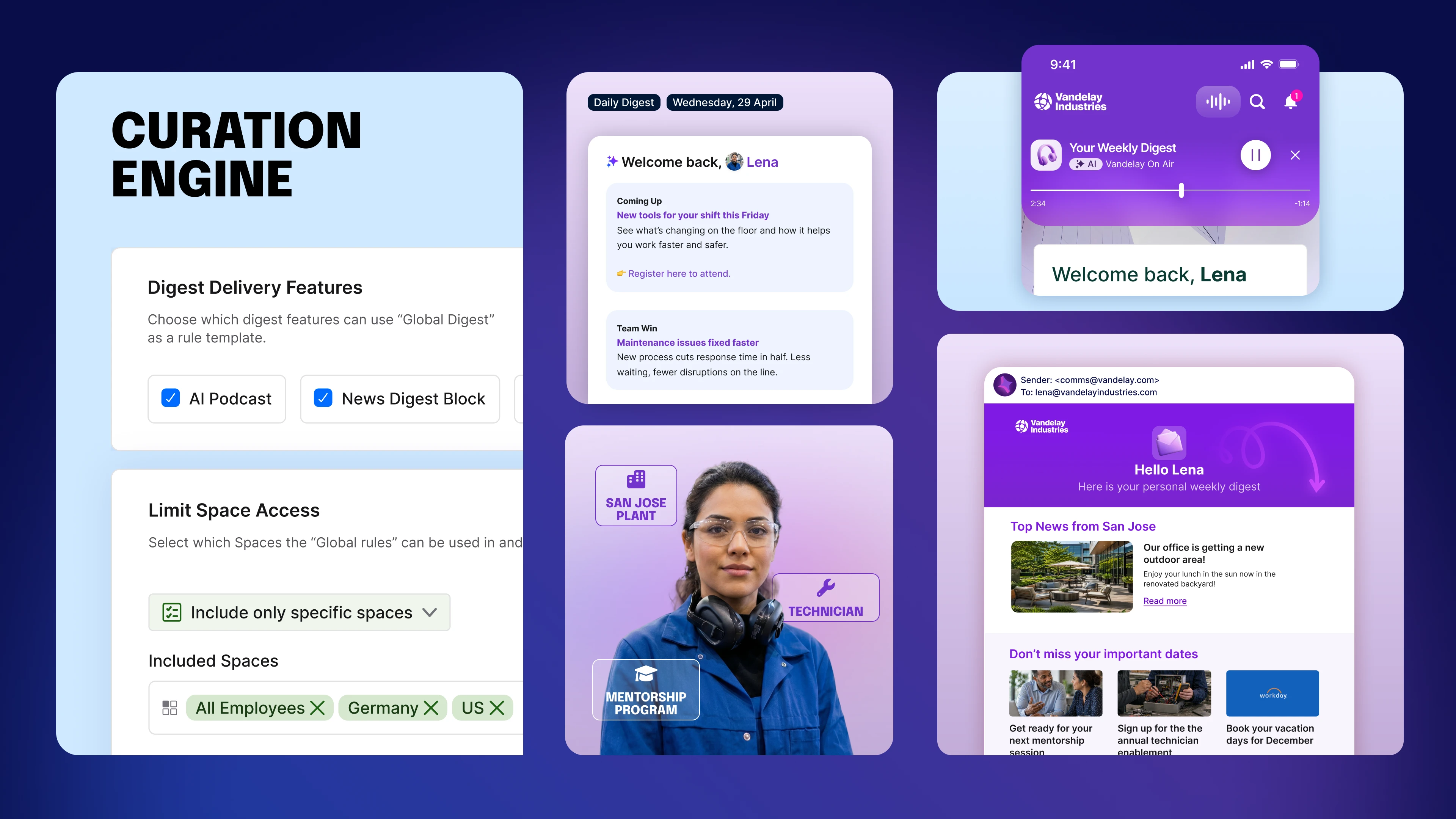

Employee Context means the system recognizes the person behind the question. It identifies individuals with specifics like role, location, team, and language. When Lena in Plant 3 in San Jose asks about a procedure, the AI knows it’s talking to a new technician at that specific facility, not a generic employee somewhere in the organization.

Personalization sharpens accuracy. The same question from a shift worker and a plant manager might require fundamentally different answers with varying details, scopes, and implications.

The Staffbase AI Quality Layer captures relevant context from previous interactions, so the system builds understanding over time. Answers get more relevant with use, without compromising data privacy or crossing organizational boundaries.

2. Scoped Knowledge: Why should AI not search everything?

One of the most common failure modes in enterprise AI occurs when the model searches too broadly and returns a generic answer instead of a specific, authoritative one.

Every platform vendor talks about connecting AI to “all your enterprise knowledge.” We’ve learned the opposite produces better results. Connecting AI to the right knowledge — and only the right knowledge — is a quality mechanism, not a limitation.

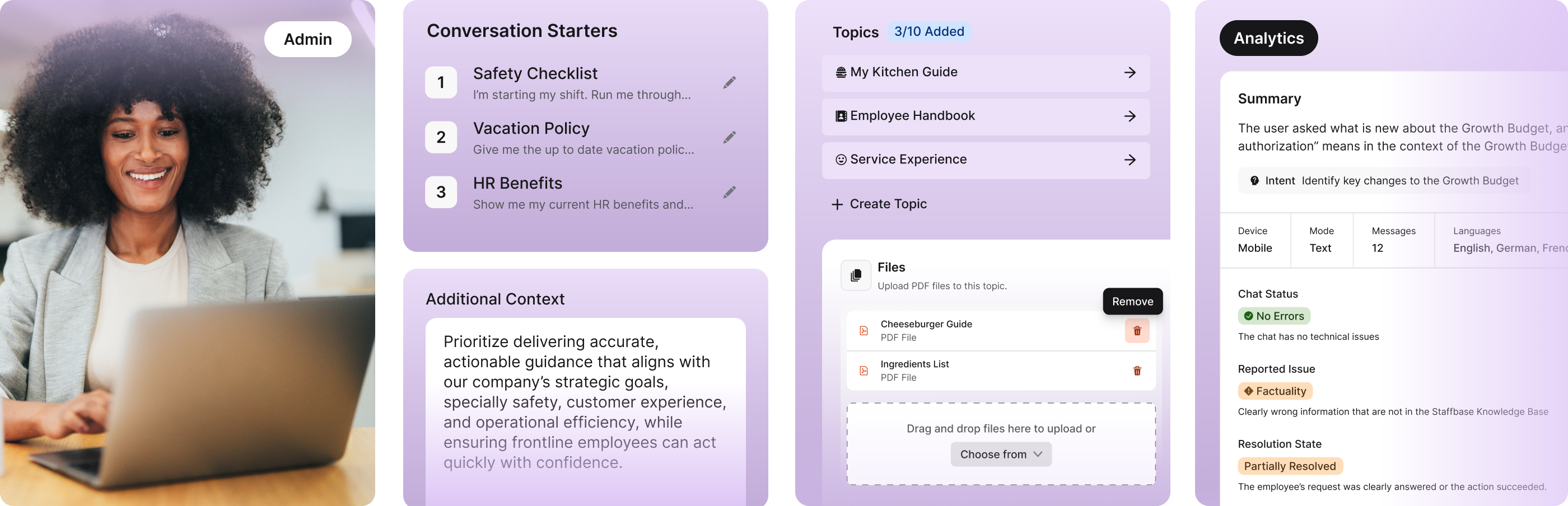

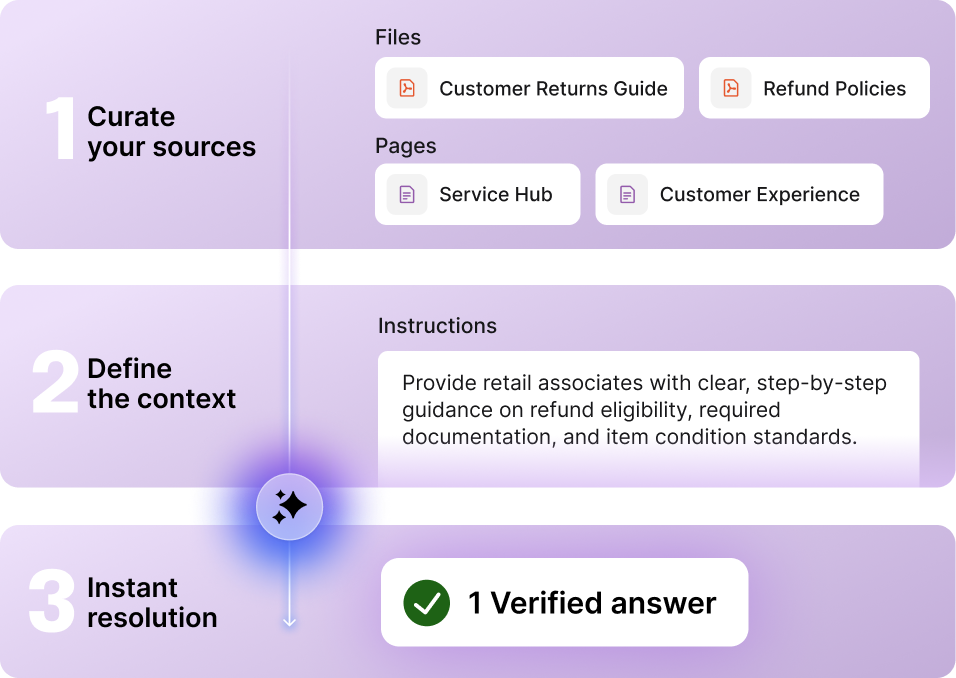

This is what Staffbase’s AI assistant architecture enables. Organizations create purpose-built assistants, each with a carefully scoped knowledge domain. A facility assistant for Plant 3. An HR assistant for the DACH region. An onboarding assistant for new hires in logistics. Each one draws from approved, curated content, and only that content.
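To make the scoping idea concrete, here is a minimal Python sketch. It is not how Staffbase implements assistants: the `Document` and `Assistant` classes, the source labels, and the naive keyword matching are all hypothetical stand-ins for a real retrieval system. What it illustrates is the ordering: the assistant narrows the corpus to its approved sources first, and only then looks for matches.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # hypothetical source label, e.g. "plant3-handbook"
    title: str
    text: str


@dataclass(frozen=True)
class Assistant:
    name: str
    approved_sources: frozenset  # the assistant's scoped knowledge domain

    def retrieve(self, query, corpus):
        """Return matching documents, but only from approved sources."""
        terms = query.lower().split()
        # Scope first: out-of-scope content is never considered at all.
        in_scope = [d for d in corpus if d.source in self.approved_sources]
        # Naive keyword match stands in for real search or embeddings.
        return [d for d in in_scope if any(t in d.text.lower() for t in terms)]


corpus = [
    Document("plant3-handbook", "Uniform exchange",
             "Request a new uniform size at the Plant 3 service desk."),
    Document("social-feed", "Comment",
             "I heard you can just swap your uniform with a colleague."),
    Document("hr-dach", "Leave policy",
             "Leave requests in DACH need manager approval."),
]

facility_assistant = Assistant(
    "Plant 3 facility assistant", frozenset({"plant3-handbook"})
)
results = facility_assistant.retrieve("uniform size", corpus)
print([d.title for d in results])  # ['Uniform exchange']
```

The social feed comment also mentions uniforms, but it never reaches the matching step because its source is not in the assistant's approved set.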

The industry is catching on. ClearBox research shows vendors are moving from universal agents toward scoped, topic-specific ones, because nobody wants an AI answering leave policy questions based on someone’s comment in a social feed, or applying one region’s rules to another.

Staffbase Navigator connects to systems like ServiceNow, SharePoint, Workday, and Confluence. The goal is not to replace them, but to make their content accessible through one trusted interface. The scoping still applies: the AI knows which source to consult for which type of question.

3. Organization Voice: How do you make AI sound like your company?

A correct answer that sounds like it came from a generic chatbot is still a bad answer. Employees notice when AI output uses the wrong terminology, tone, or names for internal programs. Good AI must reflect an organization’s voice, because each inconsistency erodes trust in the system.

That’s why we created Organization Voice. It ensures every AI interaction (every answer, translation, and generated page) is aligned with how this specific company communicates. At Staffbase, this is defined through AI communication guidelines: explicit language rules, tone and style settings, and glossary entries that ensure the AI uses the correct names, abbreviations, and terminology.

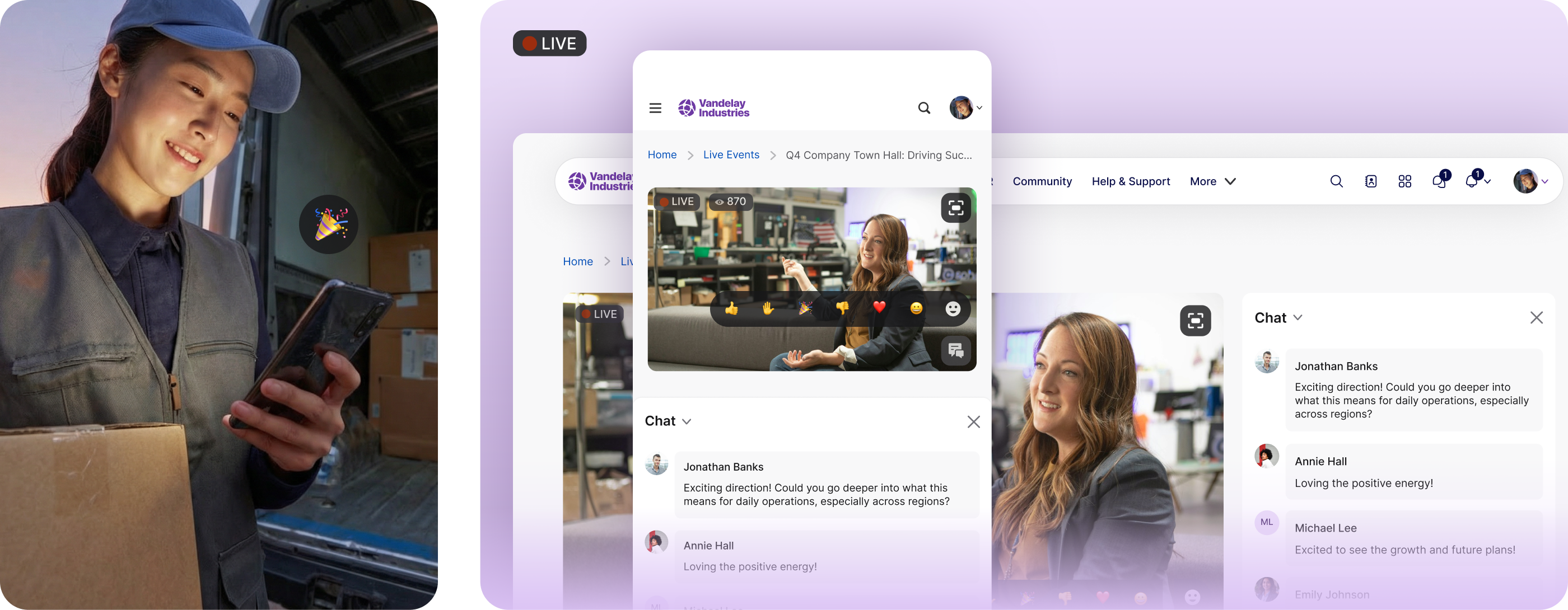

These guidelines are set per space, so different parts of the organization can maintain different voice profiles where needed. A manufacturing plant’s safety communications require a different register than the CEO’s town hall summary.
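As a rough illustration of per-space glossaries, the Python sketch below swaps generic wording for a space's preferred terms. Everything in it is an assumption made for the example: the `GLOSSARIES` mapping, the space names, and the `apply_glossary` helper are hypothetical. A production system would more likely feed such rules to the model as instructions rather than post-process text. The sketch only shows that the same draft can come out differently depending on the space it belongs to.

```python
import re

# Hypothetical per-space glossaries: generic wording -> preferred term.
GLOSSARIES = {
    "plant-3": {"staff meeting": "Shift Huddle", "ppe": "PPE"},
    "corporate": {"staff meeting": "Town Hall"},
}


def apply_glossary(text, space):
    """Replace generic terms with the preferred terms for the given space."""
    glossary = GLOSSARIES.get(space, {})
    # Handle longer terms first so multi-word entries win over shorter ones.
    for term in sorted(glossary, key=len, reverse=True):
        pattern = re.compile(rf"\b{re.escape(term)}\b", flags=re.IGNORECASE)
        # A function replacement avoids backslash handling in the new term.
        text = pattern.sub(lambda _match: glossary[term], text)
    return text


draft = "Bring your ppe to the staff meeting."
print(apply_glossary(draft, "plant-3"))    # Bring your PPE to the Shift Huddle.
print(apply_glossary(draft, "corporate"))  # Bring your ppe to the Town Hall.
```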

Organization Voice applies beyond chatbot answers. When a town hall is subtitled in real time across languages, the subtitles use the company’s established translations for key terms — not whatever a generic model would produce. When content is generated with AI assistance, it reads as if it belongs to this organization.

This is what makes the difference between AI that works for your organization and AI that just works in your organization.

4. Content Quality: What happens when AI draws from bad content?

This is the dimension that makes everything else work — or fail. The AI can only be as good as the content it draws from. If the underlying source is outdated, contradictory, or has no clear owner, the AI will confidently deliver a wrong answer.

And as ClearBox founder Sam Marshall noted, AI is now surfacing content problems that organizations ignored for years: organizations that never cared about governance are suddenly paying attention because AI turns bad content into visible business risks.

The problem is scale. Large organizations accumulate content debt the same way they accumulate technical debt. Manual review processes, as ClearBox’s research confirms, seldom succeed.

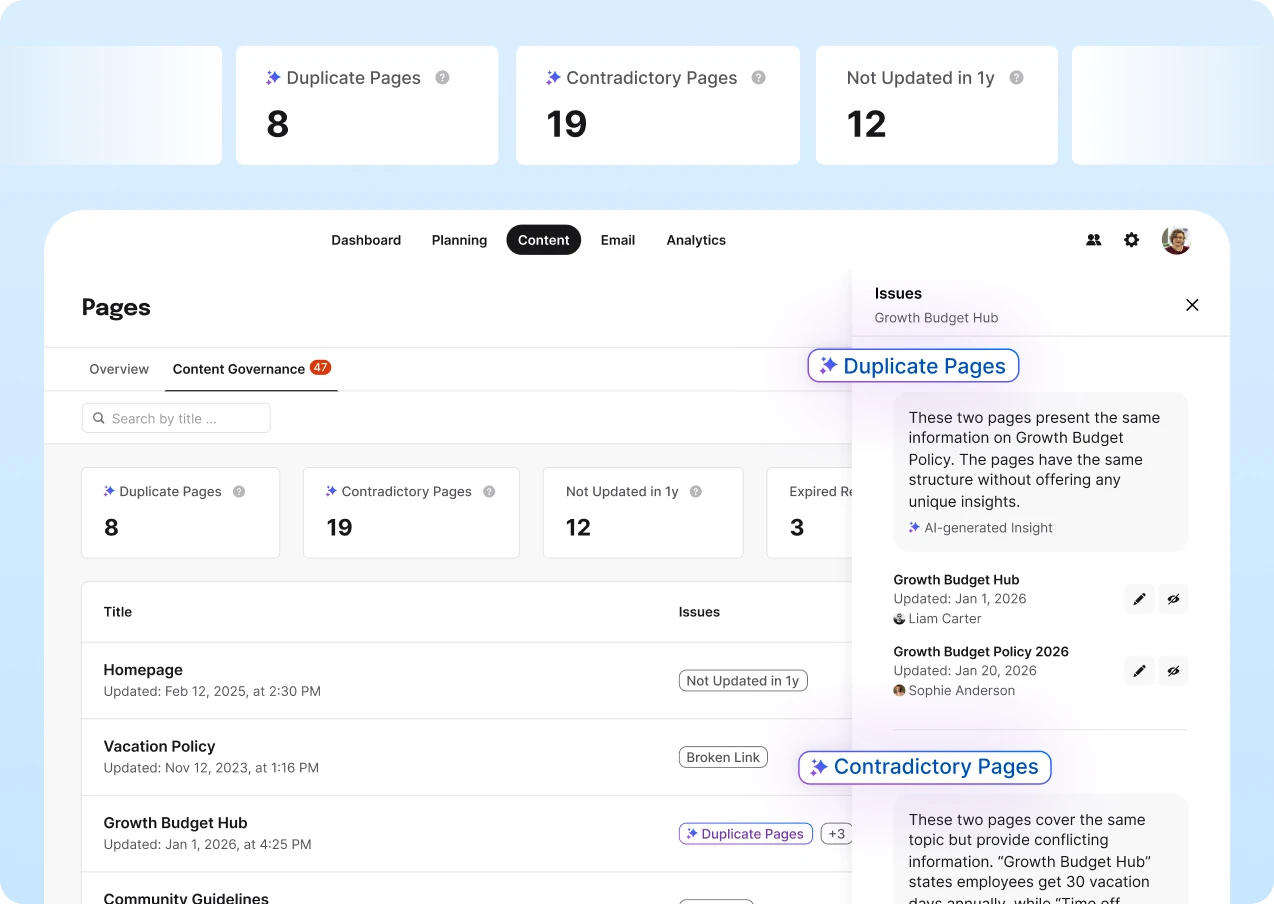

This is where Staffbase’s Content Pro capabilities become part of the AI Quality Layer. The Content Governance tab continuously monitors published pages and surfaces issues that need attention, including:

Pages that haven’t been updated in over a year

Expired review reminders

Content with zero views in the last three months

Broken links

It also uses AI to detect duplicate content and contradictory information across pages within the same space. For each flagged issue, editors get suggested actions directly in their workflow. There’s no separate audit project or spreadsheet tracking. The system surfaces what needs attention and helps editors act on it.
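The four rule-based checks listed above are simple enough to sketch in a few lines of Python. This is an illustration only, not the Content Governance implementation: the `Page` fields and the `governance_flags` function are hypothetical, and treating "three months" as a 90-day view count is an assumption. The AI-based duplicate and contradiction detection is out of scope for the sketch.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Page:
    title: str
    last_updated: date
    review_due: Optional[date]  # None means no review reminder is set
    views_last_90_days: int     # assumption: "three months" as 90 days
    broken_links: int


def governance_flags(page, today):
    """Return the rule-based governance issues that apply to a page."""
    flags = []
    if today - page.last_updated > timedelta(days=365):
        flags.append("not updated in over a year")
    if page.review_due is not None and page.review_due < today:
        flags.append("review reminder expired")
    if page.views_last_90_days == 0:
        flags.append("zero views in the last three months")
    if page.broken_links > 0:
        flags.append("broken links")
    return flags


today = date(2026, 3, 1)
pages = [
    Page("Uniform policy", date(2024, 11, 5), date(2025, 12, 1), 0, 2),
    Page("Shift schedule", date(2026, 2, 20), None, 340, 0),
]
for page in pages:
    print(page.title, governance_flags(page, today))
```

In this made-up data, the uniform policy page trips all four checks while the shift schedule page comes back clean, which is the kind of triage an editor would want surfaced without running a manual audit.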

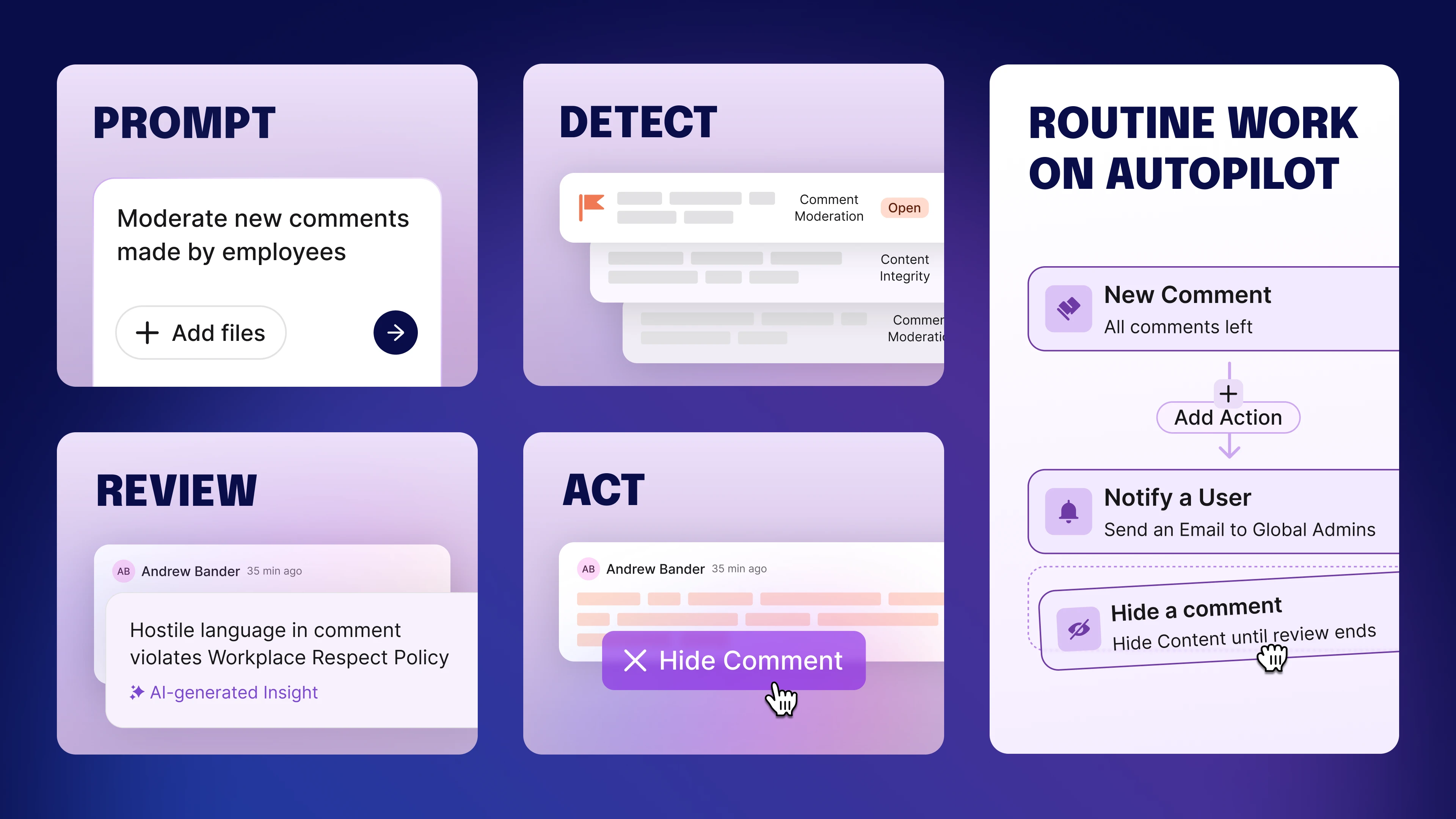

Staffbase’s Autopilot takes this a step further by replacing periodic manual audits with continuous, AI-powered automation running in the background. Autopilot automatically detects content gaps by identifying employee questions with no corresponding answers, flags outdated material that needs reviewing or updating, and monitors analytics for signs that content isn’t resonating with employees.

Content Quality is the least glamorous part of the AI Quality Layer, but it’s also the most important. Without it, everything else is built on sand.

5. Learning Loop: How does the system get better over time?

No AI system is perfect at launch. The question is whether it improves — and whether the people responsible for it can see what’s working and what isn’t.

The Learning Loop means every AI interaction generates actionable feedback. Administrators can evaluate Navigator conversations and AI interactions to understand where the system performs well and where it falls short. They can identify patterns such as topics where answers are consistently unhelpful, questions that reveal content gaps, and moments where employees abandon the interaction.
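One way to picture this kind of pattern-finding is a small aggregation over a feedback log. The Python sketch below is hypothetical throughout: the log format, the outcome labels, the thresholds, and the `topics_needing_attention` function are assumptions for illustration, not a description of Staffbase analytics. It groups interactions by topic and reports the dominant problem for any topic where at least half of the interactions went badly.

```python
from collections import Counter, defaultdict

# Hypothetical feedback log of (topic, outcome) pairs. Outcomes are
# "helpful", "unhelpful", "unanswered", or "abandoned".
feedback = [
    ("uniforms", "unhelpful"),
    ("uniforms", "unhelpful"),
    ("uniforms", "helpful"),
    ("leave policy", "helpful"),
    ("leave policy", "helpful"),
    ("parking", "unanswered"),
    ("parking", "unanswered"),
    ("shift swaps", "abandoned"),
]


def topics_needing_attention(log, threshold=0.5, min_events=2):
    """Map each problem topic to its most common non-helpful outcome."""
    per_topic = defaultdict(Counter)
    for topic, outcome in log:
        per_topic[topic][outcome] += 1

    report = {}
    for topic, outcomes in per_topic.items():
        total = sum(outcomes.values())
        if total < min_events:
            continue  # too little data to call it a pattern
        problems = Counter(
            {o: n for o, n in outcomes.items() if o != "helpful"}
        )
        if sum(problems.values()) / total >= threshold:
            report[topic] = problems.most_common(1)[0][0]
    return report


print(topics_needing_attention(feedback))
# {'uniforms': 'unhelpful', 'parking': 'unanswered'}
```

Here "unanswered" on parking would point to a content gap, while "unhelpful" on uniforms suggests the content exists but the answers built from it are not landing.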

This goes beyond basic usage analytics. It’s governance analytics, the kind ClearBox research identifies as a critical gap in most platforms. Not just page hits and dwell time, but: which content owners need to act? Which pages are generating bad AI answers? Where are employees asking questions that the system can’t handle?

The Learning Loop connects directly to the other four dimensions. If employees in a specific location keep asking about a procedure that isn’t covered, that’s a Content Quality signal. If answers about a policy are technically correct but employees aren’t acting on them, that might be an Organization Voice issue — the answer is right, but not framed in a useful way.

Every question Lena asks — and every question she can’t get answered — teaches the system what’s working and what’s not. Over time, the gap between “almost right” and “actually right” narrows because the organizational intelligence surrounding it gets sharper.

Safe and good for every employee

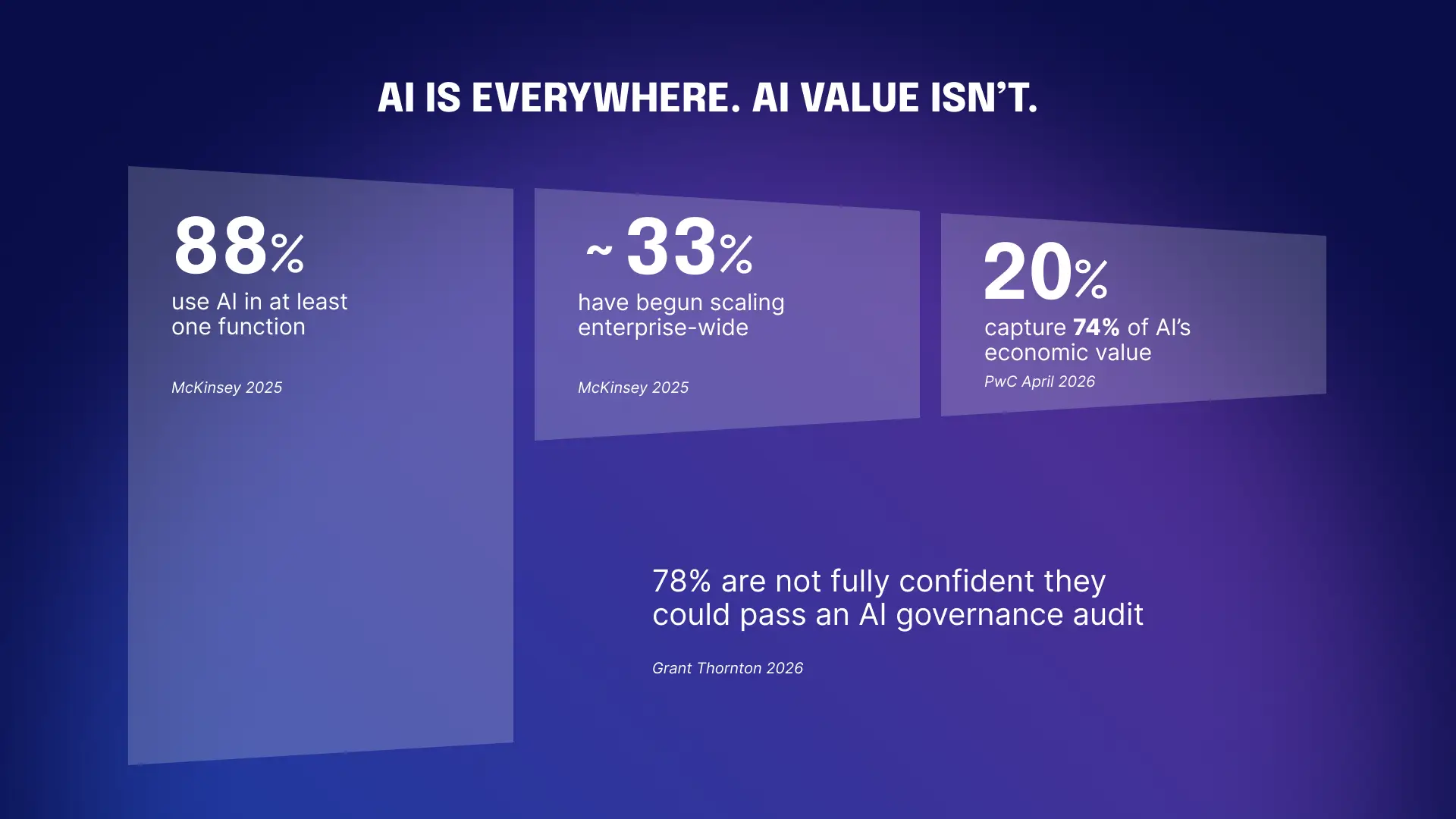

The numbers tell the story. According to McKinsey, 88% of organizations use AI in at least one function in 2025, but only a third have begun scaling it across the business. And PwC numbers show that just 20% are capturing significant economic value from AI in 2026. The bottleneck isn’t the technology. It’s trust.

That gap isn’t closing because models are getting better. Models have been good enough for a while. The gap closes when organizations can trust that everything the AI produces — every answer, every translation, every briefing — meets the same quality standard they’d apply to any other piece of organizational communication.

For eleven years, Staffbase built the last mile of reach — getting information to every employee, everywhere. Now we’re building the last mile of quality: making sure what AI delivers is accurate, contextual, and trustworthy before it reaches anyone.

That’s what the AI Quality Layer delivers. Not safe enough to experiment with. Good enough to deploy. For every employee.
