Do we still need an intranet if we already use Teams or Slack?

Teams and Slack coordinate work in progress. An intranet defines the official answers that employees and AI can trust. Discover why organizations still need both — and how each tool supports a different stage of the information lifecycle.

TL;DR: Do you need an intranet if you have Teams or Slack?

Yes — but not because intranets and collaboration tools compete. They serve different stages of the information lifecycle, and confusing those stages is where most organizations run into trouble.

Platforms like Teams and Slack manage work while it's still forming: conversations, drafts, decisions in progress. An intranet holds what the organization officially stands behind once those decisions are final, with clear ownership, defined permissions, and review cycles.

That separation matters more in 2026 than it did five years ago. As AI assistants become the first place employees go for answers, they pull from wherever official-looking content lives. If verified guidance isn't stored in a governed system, AI surfaces answers from chat threads, outdated files, and partial context, and delivers confidently wrong answers to employees.

What most advice gets wrong

The common framing is "intranet vs. collaboration tool," as if organizations have to pick one. That's the wrong question. The real question is: Where does information go once a decision is final? If the answer is "wherever it landed in Teams," you don't just have a governance problem. You have a trust problem, and AI makes that problem visible faster.

So which do you actually need?

→ Choose an intranet if your primary need is durable, governed knowledge like policies, org-wide updates, and official guidance that employees or AI assistants should be able to rely on. Governance and permissions matter more than speed of delivery.

→ Choose a collaboration tool (Teams, Slack) if your primary need is coordination while work is still in motion, like fast communication, real-time decisions, and documents in draft. These tools are optimized for speed, not permanence.

→ Choose both, with clear boundaries, if you run a large or distributed organization. Most organizations of that size do. The intranet handles "what is officially true." The collaboration tool handles "what we're figuring out."

Why one AI can't do both jobs

Most organizations assume a single AI assistant can handle everything — meeting summaries and policy questions, task drafts and official guidance. In practice, these require fundamentally different underlying systems, and conflating them is where AI implementations break down.

There are two distinct types of AI at work in most enterprises:

→ Productivity AI (Teams, Slack, Copilot): Embedded in collaboration tools, optimized for speed. Effective at summarizing meetings, drafting messages, and accelerating daily work. But these systems retrieve from the same environment where work is still forming — duplicate files, evolving drafts, parallel conversations. That makes them unreliable sources for final, authoritative answers.

→ Governed knowledge AI (company AI): Grounded in a system of record where every piece of content has a defined owner, a review cycle, and a clear status. When employees ask a question, this system can return a cited, approved answer they can act on, not the most recent thing someone wrote in a chat thread.

The failure mode isn't using both. It's never deciding which system owns what.

Key takeaway: Productivity AI and governed knowledge AI retrieve from fundamentally different environments. That difference determines whether employees get an answer they can act on — or one that needs to be verified before anyone trusts it.

What are enterprise collaboration tools designed to do?

Collaboration tools like Teams and Slack are designed to help teams coordinate work while decisions are still evolving. They prioritize speed and participation over permanence, which makes them excellent for discussion and iteration, but unreliable as a source of final guidance.

In many organizations, these tools sit on top of shared file systems where documents accumulate faster than they're reviewed or retired. Messages are written quickly. Files are shared mid-edit. Guidance shifts in real time as people react, clarify, and adjust.

That environment is exactly right for what these tools are built to do: active coordination, drafts in progress, and productivity AI tasks like summarizing threads or generating first-draft replies from informal context.

The problem isn't the tools. It's treating that environment as a system of record and routing AI-powered answers through it as if every thread and shared file represents final, verified guidance.

What an AI-powered intranet does differently in 2026

An intranet exists to define what information the organization officially stands behind. In an AI-driven workplace, that role expands: the intranet becomes the governed source AI assistants rely on to deliver reliable answers.

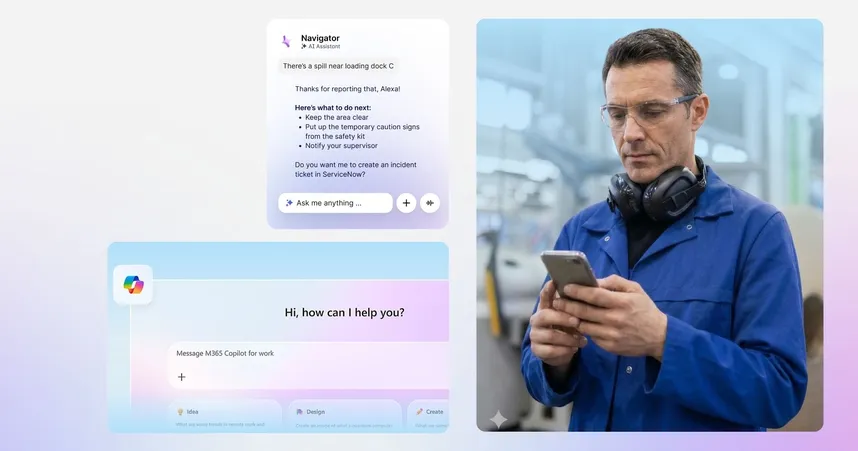

In 2026, an AI-powered intranet shifts from a content repository to an answer layer. Instead of asking employees to search through pages and PDFs, it surfaces clear, cited answers directly. This only works when governance is built in by design: every piece of content has a defined owner, a review cycle, and a permission status before an AI assistant is allowed to retrieve it. Staffbase takes this approach with Staffbase Navigator, which grounds its answers in content that has already been reviewed and approved rather than surfacing whatever is most recent or most visited.

| Tool | Role | What it does well | The "Trust Gap" |
|---|---|---|---|
| Microsoft Teams | Coordination layer | Real-time messaging & tactical coordination | Cannot distinguish between drafted & approved work |
| Slack | Collaboration layer | Real-time "pull" comms, information, and knowledge sharing | Lacks the governance required to act as a company trust anchor |
| Microsoft SharePoint | Storage layer | Housing raw data and team co-editing documents | Files pile up without clear ownership, so AI tools can't tell what's current |
| Microsoft Copilot | Productivity layer | Summarizing work in motion, drafting contextual replies | Inherits the noise of structural data, cannot decide what's official |
| Staffbase (AI-powered Intranet) | Answer layer | Providing a governed source of truth that reaches all employees | Bridging the trust gap: the place for publishing the outcome once it's official |

How to assign collaboration tools and intranets to the right roles

The most effective organizations don't reduce their toolset; they define clear jobs for each. The distinction matters most once AI is involved: when an employee asks a chatbot a question, they don't want a list of possibilities; they expect the answer. If your system of record contains conflicting versions of a policy, AI will blend drafts with final versions and respond with confidence, even when it's wrong. Employees aren't browsing anymore; they're delegating judgment. That's why which system owns what is no longer an IT question — it's a trust question.

The table below shows how that split works in practice.

| | Collaboration tools | AI-powered intranet |
|---|---|---|
| When it's used | Work in motion, decisions still forming | Work that's final and safe to act on |
| Governance | Content survives by momentum | Content survives by verification |
| Reach | Optimized for desk-based, licensed users | Designed for zero-barrier frontline reach |

In Staffbase, governance is built in by design. Content cannot exist without an owner, review cycles are mandatory, and expired information is flagged or removed before it's surfaced to employees or AI.
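As a rough illustration of the rule described above, the eligibility check can be sketched in a few lines of Python. This is not Staffbase's implementation or API. The `ContentItem` fields, the 180-day default review cycle, and the function names are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass(frozen=True)
class ContentItem:
    """Hypothetical content record; field names are illustrative only."""
    title: str
    owner: Optional[str]            # accountable person or team, if any
    last_reviewed: Optional[date]   # date of the last completed review
    review_cycle_days: int = 180    # assumed review interval


def is_eligible_for_ai(item: ContentItem, today: date) -> bool:
    """Allow retrieval only for owned content whose review has not expired."""
    if not item.owner:
        return False  # no owner: nobody stands behind the content
    if item.last_reviewed is None:
        return False  # never reviewed: status unknown
    expires_on = item.last_reviewed + timedelta(days=item.review_cycle_days)
    return today <= expires_on


def eligible_items(items: List[ContentItem], today: date) -> List[ContentItem]:
    """Filter a collection down to what an assistant may cite."""
    return [item for item in items if is_eligible_for_ai(item, today)]
```

The design choice worth noting is that the filter runs before retrieval. Content that fails the check never reaches the assistant, so the assistant does not have to judge for itself what is current.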

How AI shapes the frontline communication gap in 2026

The limits of collaboration tools become visible fastest on the frontline. Most collaboration platforms are designed for desk-based employees with email access, licenses, and time to scroll for context. Frontline workers need answers they can trust through channels they can actually access, without searching chat history, logging into multiple tools, or guessing which message is still valid.

Data shows the frontline communication gap. In the 2025 International Employee Communication Impact Study by Staffbase and YouGov, only 29% of non-desk workers said they’re “very” or “rather” satisfied with the quality of internal communication. Among desk-based employees, that number rises to 47%. The difference isn’t motivation or engagement — it’s access to clear, reliable information.

Research from Gartner reinforces the pattern: 38% of employees report receiving too much communication, and 33% say the information they receive is inconsistent or conflicting. That environment is already difficult to navigate. When AI operates inside it without governance, it doesn't help employees find the right answer faster. It surfaces more answers, with equal confidence, from a system that was never designed to distinguish drafts from decisions.

The bottom line: For frontline workers with limited time and no fallback channel, a wrong answer isn't a minor friction point. It's a shift missed, a process done incorrectly, or a compliance risk acted on.

The operating model that high-performing organizations use in 2026

In organizations that get this right, the boundary between work in motion and work that is trusted is absolute. A project team uses a messaging tool like Teams to iterate on a new compliance process, debating trade-offs in real time. Once finalized, that process is graduated to the intranet, where it is assigned an owner and a review cycle.

This allows employees to move fast during the decision-making phase while ensuring that when they need an answer later, they aren't sifting through chat history. They're receiving verified guidance from the system of record — and that's what allows AI-powered company chatbots like Staffbase Navigator to return accurate, cited answers with confidence.
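The hand-off from work in motion to the system of record can be pictured as a single explicit step. The sketch below is a minimal illustration in Python, assuming hypothetical `Draft` and `PublishedRecord` types and a `graduate` function. None of these names come from Staffbase, Teams, or Slack.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Draft:
    """Hypothetical draft worked out in a collaboration tool."""
    title: str
    body: str
    source: str  # where the draft was iterated, e.g. a channel name


@dataclass(frozen=True)
class PublishedRecord:
    """Hypothetical governed record in the system of record."""
    title: str
    body: str
    owner: str
    published_on: date
    next_review: date


def graduate(draft: Draft, owner: str, published_on: date,
             review_cycle_days: int = 365) -> PublishedRecord:
    """Promote a finalized draft; refuse if governance details are missing."""
    if not owner.strip():
        raise ValueError("a published record needs an owner")
    if review_cycle_days <= 0:
        raise ValueError("review cycle must be a positive number of days")
    return PublishedRecord(
        title=draft.title,
        body=draft.body,
        owner=owner,
        published_on=published_on,
        next_review=published_on + timedelta(days=review_cycle_days),
    )
```

A call such as `graduate(draft, "Compliance team", date(2026, 4, 1), 180)` would return a record with an owner and a scheduled review date, while a call without an owner fails instead of publishing ungoverned content.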

Over time, that consistency becomes a signal: when the company publishes something on the intranet, it can be trusted. That trust isn't built through messaging volume or reminders. It's earned through operational usefulness.

How this works in practice: WSW

Wuppertaler Stadtwerke (WSW) faced a workforce split between desk workers and blue-collar employees. To bridge the gap, they launched a Staffbase-powered intranet and app called WSW.Punkt.

Instead of leading with communication, they led with utility. Digital payslips, vacation balances, and sick note submission were built directly into the app, giving bus drivers and technicians a reason to open it every day. That daily habit created the conditions for strategic messages to actually get read. The platform reached a registration rate of nearly 90%, with monthly active usage between 80 and 85%.

When is an AI-powered intranet the wrong investment?

An AI-powered intranet is a structural decision about trust, governance, and how answers travel through your organization. It's not the right move for every situation.

It's likely the wrong investment if:

→ There's no shared agreement on which information is official

→ Content ownership and review cycles are undefined

→ You're a smaller organization where direct communication resolves questions faster than a governed system would

In these environments, adding AI introduces overhead without improving clarity. The tool only delivers value when your organization is ready to be explicit about which information should remain dependable over time.

How to align stakeholders when the organization is ready

The question isn't whether to use AI. It's whether each stakeholder's core requirements are met before scaling it.

| Stakeholder | Core concern | How governed AI addresses it |
|---|---|---|
| IT and security | AI surfacing unverified content increases security exposure | Content ownership, access rights, and review cycles are enforced at the source |
| Internal comms | Without governance, AI amplifies inconsistent or outdated guidance | Only official, owned, and reviewed content is eligible for AI answers |
| HR and compliance | AI exposes gaps or contradictions in existing policies | Policies are standardized and governed before AI is introduced |

Why organizations trust Staffbase with this decision

Most organizations don't have a content problem. They have a clarity problem: too much information, too little agreement on what's official, and AI systems that can't tell the difference.

Staffbase is built around that specific constraint. The intranet, mobile app, and email channel share a single governance layer, so the same content standards apply whether an employee is reading a policy on a desktop or asking Staffbase Navigator a question on a factory floor. That consistency is what makes AI reliable rather than risky.

For organizations evaluating this decision, the right question isn't "which tool has the best AI features?" It's "which system can we actually trust to govern what AI is allowed to say?"

If you're ready to define that answer for your organization, we're happy to show you how it works in practice.

Validity Note: This article reflects the Staffbase POV and enterprise market conditions as of April 2026.

Frequently asked questions (FAQs)

As AI becomes the first place employees go for answers, these FAQs clarify what intranets and collaboration tools can — and cannot — be trusted to do in 2026.